Security by Design Decisions

Integrating security decisions into the engineering workflow of automated plants

You have certainly heard of security by design before; it has become a buzzword. As part of the IDEAS research project, we are developing a concept for integrating security decisions as early as possible — i.e. “by design” — into the engineering of automated systems. The concept is called “Automation Security by Design Decisions”.

Before we get started, however, we need to clarify two questions: First, why everyone is so keen on security by design. Second, how we actually define security by design.

Why do we even need security by design?

To make security easier to picture, let’s imagine that its purpose is to prevent water from leaking out of a pipe. We call the discipline “seal engineering” and assume it is new.

Now imagine if pipes were engineered without seal engineers, and the “sealers” — like the security engineers at the moment — were only brought in when the pipe was already finished, perhaps even in operation.

What else can they do then? Sure, just put up a sign: Attention, pipe leaking. At least then people know about it and can walk around the puddle instead of getting their feet wet.

This is unsatisfactory because with many puddles, everything is eventually under water. So, next try: bucket under the leak.

Also unsatisfactory, because the pipe has a purpose: to transport water from A to B. And B is not the bucket… So, next try: tape over the leaks.

Too bad that the seal engineers were not consulted when the material was selected: the pipe material is super water-repellent. Unfortunately, it is also tape-repellent, so the tape does not stick very well. And actually, it would be much better to keep water from leaking through holes in the first place. Unfortunately, the pipe design can no longer be changed, but there is always sealing liquid, familiar from car or bicycle tires, which can seal leaks.

Obviously, you have to remember to add the liquid every time you fill the pipe. Phew, to make sure no one forgets, we’d better put together a detailed manual for the pipe users.

What do we see? What was originally an elegant, beautiful, well-designed pipe has now become very ugly and awkward to use. And that’s exactly what we’re used to in security:

- We try to prevent users from clicking on malicious links with “warning signs” (awareness training).

- We use “buckets” (antivirus programs) to try and catch malware before it is executed.

- We try to close vulnerabilities with “patches” (software updates).

- We try to make the use of the systems safer with “sealing liquid” and “manuals” (user policies).

This might sound familiar: security is nothing but trouble, making products difficult to use. This is often caused by the fact that security is the very last thing to be considered. If the seal engineers had been involved right from the beginning, they could have had a say in the pipe material, the wall thickness, and the design of the flanges. This would have resulted in a beautiful pipe that is “sealed by design,” even if you can’t tell by looking at it.

Early security decisions are better than late decisions, and leveraging inherent system capabilities is better than workarounds added afterwards.

This also applies to security in automation: “Security by design” makes systems secure in a more efficient way by addressing security early in the engineering process. But no one knows how this is supposed to work in practice, partly because a few more disciplines may be involved in the design of automated systems than in the design of a pipe.

Definition: Security by Design

Now for the promised definition. We need three aspects for defining security by design.

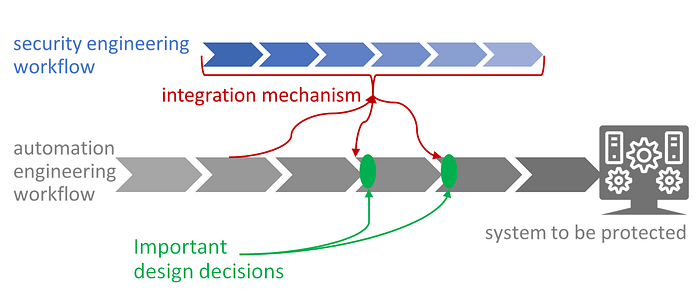

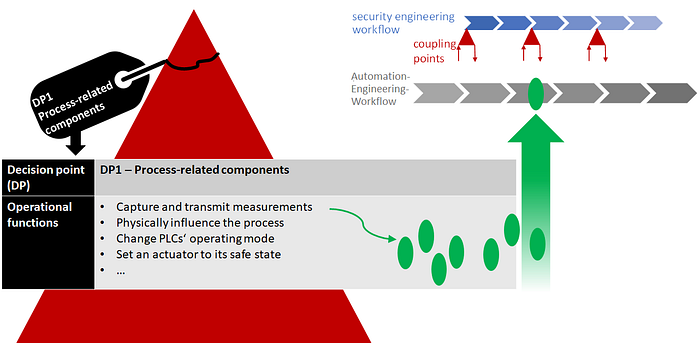

In gray: The automation engineering workflow used to design the system to be protected (for example, consisting of basic engineering, detail engineering, etc.).

In blue: A security engineering workflow that is used to make security decisions.

In red: An integration mechanism that integrates the security decisions into the automation engineering workflow early enough to influence important design decisions (green).

This leads to the following definition:

Security by Design is the integration of security decisions into an existing engineering workflow early enough that important design decisions can still be influenced.

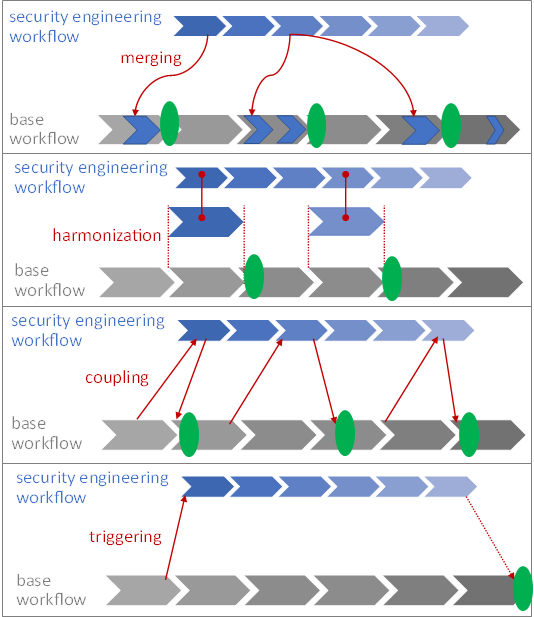

Existing approaches to security by design can be easily distinguished by their integration mechanisms:

- The integration mechanism “merging” means that the base workflow is modified to include security engineering.

Examples from software engineering are Microsoft’s “Security Development Lifecycle” or, more recently, DevSecOps.

- “Harmonization” aims at making the security engineering workflow as similar as possible to the base workflow so that the two can be easily integrated.

Examples can be found in model-based systems engineering: For modelling with UML there is the security extension “UMLsec”; for SysML, there is “SysMLsec”.

- “Coupling” leaves both workflows unchanged, but defines fixed coupling points in the base workflow. At these coupling points, information is fed into security engineering and results from security engineering flow back into the base workflow, and this happens before the important design decisions (the green points) are made.

Examples are found in the area of security for safety. Both IEC TR 63069:2019 and ISA TR84.00.09 Ed3 (still in draft state) build upon this approach of “co-engineering”.

- “Triggering” is a special case of coupling with just a single coupling point. Once the base workflow arrives at a certain point, the security engineering workflow is triggered based on the information available at this point.

Examples are once again found in the area of security for safety (Security PHA), but also in critical infrastructure security: INL’s Consequence-driven, Cyber-informed Engineering (CCE).

All these approaches have one thing in common: they only work if I know what the engineering workflow looks like. They are therefore dependent on that base workflow. This is, of course, more true of methods in which security merges completely with the base workflow than of those in which only a single trigger point is defined in the base workflow.

However, this is also a problem: the more Security by Design is dependent on the base workflow, the more difficult it is to transfer the method to other workflows.

How many companies do you know that use the same engineering workflow for their automation systems? Even worse, how many projects within the same company do you know that use the same engineering workflow?

The base workflow is very different depending on the industry, region, company, project… And the variations tend to increase due to more individual, modularized plants and Industry 4.0. If I then have to redefine how security by design works every time, security by design will never take root in automation.

Security by design must succeed regardless of the type of automation engineering workflow.

Security by Design Decisions: introduction to the concept

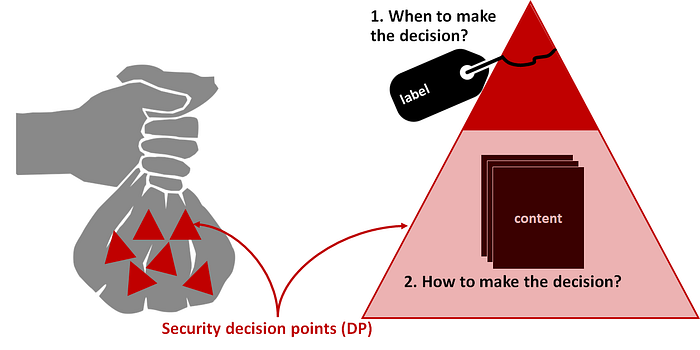

Now that we have defined Security by Design and the problem is clearer, I would like to introduce you to a concept we have christened “Security by Design Decisions”. It does not focus on a process or phases, but on decisions, or more precisely, decision points. You will see in a moment why this is important.

You can imagine the concept as a bag of red triangles. Each red triangle is a decision point.

The concept consists of two parts:

- In the first part, we only look at the decision point from the outside, at its “label”, and answer the question WHEN we make a security decision.

- In the second part, we make the decision point transparent, look inside and answer the question of HOW we make the security decision.

1. When to make a security decision

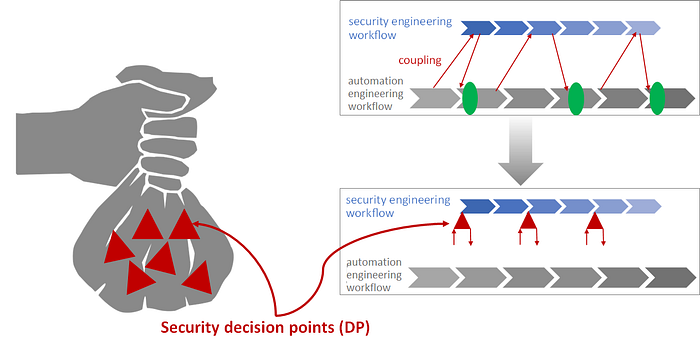

Remember the “coupling” integration mechanism, where both workflows continue to function independently? The only dependency on the base workflow is that you have to define fixed “hook-in points” for the security decisions. In our concept, we now detach these hook-in points and instead define so-called decision points — you can see them as red triangles in the graphic — which contain all the necessary information to “hook” a security decision into the right place in any base workflow and then make it.

Thus, for our security-by-design decision concept, we do not define a new workflow at all and do not even expect any special properties of the existing workflow — we only define decisions to be made. The associated decision point contains all the information for coupling the decision to any base workflow and finally for making the decision.

The question of where a decision point must be hooked in, i.e. when a security decision must be made at the latest, can be answered with the information on the “label” of the decision point.

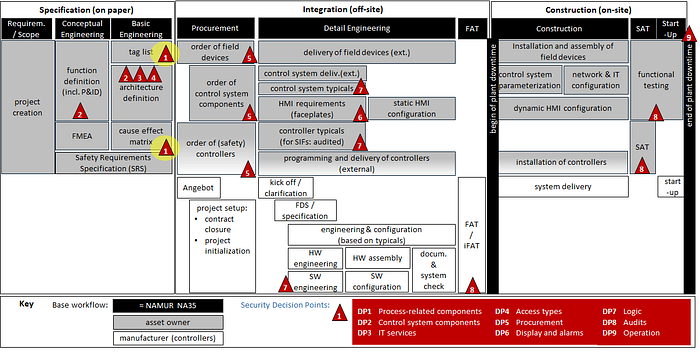

We look at this using the exemplary decision point “DP1 — process-related components”. It is one of nine decision points that we identified in an analysis of real engineering workflows with our application partners INEOS and HIMA.

The label contains a list of operational functions that are potentially affected by the security decision. For the process-related components, this would be, for example, the capturing and transmission of measurements, the physical influencing of the process or the change of a PLC’s operating mode.

You can think of these operational functions as the green dots we used above to illustrate important design decisions. The green dots help anchor the security decisions in the automation engineering workflow. At the green dots, when it is determined how the operational functions will be implemented, the security decisions affecting these operational functions also need to have been made.

Here is an example of how all decision points can be integrated into a practical automation engineering workflow. We are again looking at only the first decision point DP1 (highlighted in yellow): In our example, the relevant design decisions are made as part of the tag list and cause-effect matrix creation.

Now we have clarified how to place the security decision points in your individual engineering workflow and thus assemble your own security-by-design workflow.
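The placement rule can be sketched in a few lines of Python. This is only an illustration under assumptions: the class names, the `latest_hook` helper, and the mapping of workflow steps to operational functions are hypothetical and not part of the concept's published data model. The idea shown is that the label's operational functions are compared against what each workflow step designs, and the first matching step marks the point by which the security decision must have been made.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional, Sequence


@dataclass(frozen=True)
class WorkflowStep:
    """One step of a base workflow and the operational functions it designs."""
    name: str
    functions_designed: FrozenSet[str]


@dataclass(frozen=True)
class DecisionPointLabel:
    """The outside of a decision point: its name and the affected functions."""
    name: str
    operational_functions: FrozenSet[str]


def latest_hook(workflow: Sequence[WorkflowStep],
                label: DecisionPointLabel) -> Optional[WorkflowStep]:
    """Return the first step that designs a function listed on the label.

    The security decision has to be made by the time this step is reached.
    Returns None if no step of the workflow touches the label's functions.
    """
    for step in workflow:
        if step.functions_designed & label.operational_functions:
            return step
    return None


dp1 = DecisionPointLabel(
    name="DP1 process-related components",
    operational_functions=frozenset({
        "capture and transmit measurements",
        "physically influence the process",
        "change PLC operating mode",
    }),
)

# Hypothetical workflow: which step designs which function is an assumption.
workflow = [
    WorkflowStep("process design", frozenset()),
    WorkflowStep("tag list creation",
                 frozenset({"capture and transmit measurements"})),
    WorkflowStep("cause-effect matrix creation",
                 frozenset({"physically influence the process"})),
]

hook = latest_hook(workflow, dp1)
print(hook.name if hook else "no hook found")
```

Because the function only needs a list of steps and the functions they design, the same label can be hooked into a differently structured workflow without changing the decision point itself.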

2. How to make a security decision

The far more interesting question is still open: what actually is a security decision and how do you make it?

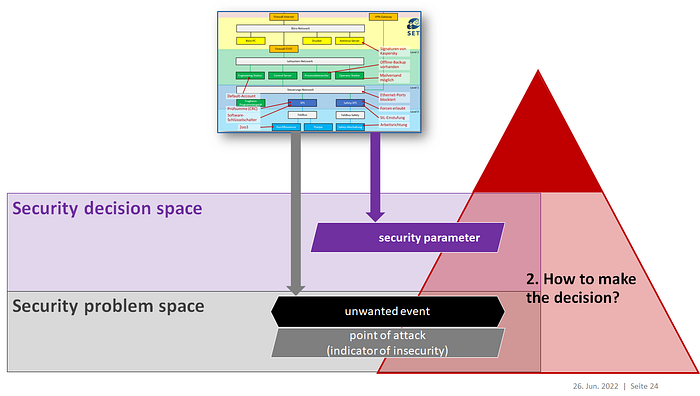

Again, the decision points will help you; but this time, looking at the label is not enough; we need to look inside a decision point.

But before we do that, let’s try to understand what a security decision actually is. Formally: The definition of security requirements and solutions that implement these requirements. And practically?

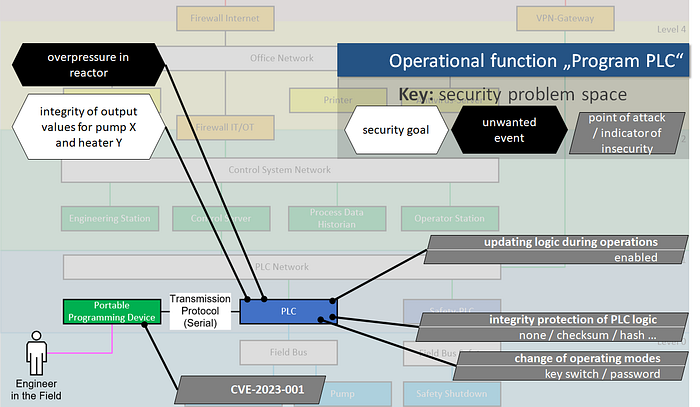

Let’s take a look at an exemplary scope. Here you can see an example OT network, with the field and control level at the bottom in light and dark blue, the control level above in green, and then — separated by a firewall, as it should be — an office network in yellow. And in red we have examples of what security-relevant decisions could look like. We see: Security decisions do not always appear to be “security” at first glance. Also, there is not one big security decision. Rather, security decisions are a collection of small decisions scattered across different disciplines.

This means that even if you have no security-by-design concept at all, you are still making security decisions all the time.

It is impossible NOT to make security decisions. The key is to make them visible.

Security by Design must therefore help you to render the many invisible security decisions visible and to turn security decision-making into a conscious process.

And that is exactly what the content of each decision point is about: making the security-relevant information from the automation engineering workflow visible in order to enable you to make conscious security decisions on this basis.

For this purpose, the decision point contains information grouped into a security decision space (purple) and a security problem space (gray).

The decision space contains security parameters, i.e. the collection of small decisions that potentially affect the security of your system — e.g. how you change PLC operating modes.

The problem space contains, on the one hand, undesirable events known from the base workflow, e.g. overpressure in the boiler, and on the other hand possible points of attack which can lead to these undesirable events. We also call these points of attack “Indicators of Insecurity” and will see why in a moment in the example.

Back to the example: We need to bring order to the unstructured hodgepodge of potentially security-relevant information, and we have already learned the first concept for ordering: it is the operational functions.

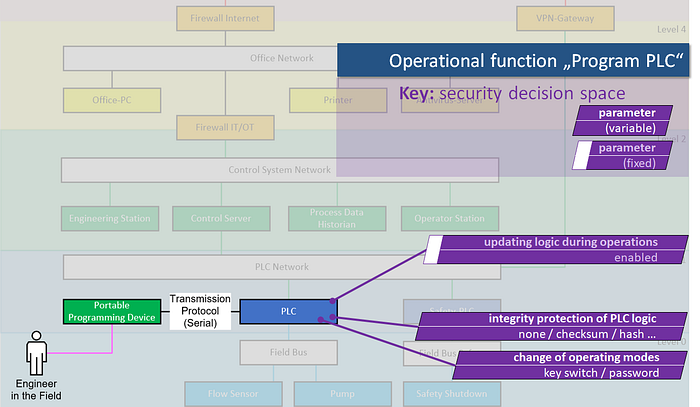

While the label of the decision point only showed the titles of the operational functions, now you can see one in detail: the function “Program PLC”. For simplicity, let’s assume there is only one way to program the PLC in the example, and that is locally with a portable programming device. The operational function contains all the information about how programming works: which human role can do it, which programming device it employs, and which transmission protocol is used between the programming device and the PLC.

The operational functions are a good filter for security-relevant information: In order not to get lost in the jumble of information, we only look at the information that is relevant in the context of the operational function currently under consideration. The function thus gives the security-relevant information its operational context. Also, it models two aspects that are essential for security assessments: the function’s intention and the humans involved.
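To make the filtering idea concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `InfoItem` structure, the `in_context` helper, the role name, the protocol placeholder, and the pressure transmitter are hypothetical and do not come from the project's data model.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class InfoItem:
    """One piece of engineering information and the functions it belongs to."""
    kind: str                 # e.g. "role", "device", "protocol"
    value: str
    functions: FrozenSet[str]


def in_context(items: List[InfoItem], function: str) -> List[InfoItem]:
    """Keep only the items that matter for the given operational function."""
    return [item for item in items if function in item.functions]


# Hypothetical, unstructured pile of information from the base workflow.
items = [
    InfoItem("role", "automation engineer", frozenset({"Program PLC"})),
    InfoItem("device", "portable programming device",
             frozenset({"Program PLC"})),
    InfoItem("protocol", "programming protocol (placeholder)",
             frozenset({"Program PLC"})),
    InfoItem("device", "pressure transmitter",
             frozenset({"Capture and transmit measurements"})),
]

for item in in_context(items, "Program PLC"):
    print(f"{item.kind}: {item.value}")
```

The pressure transmitter drops out of the view because it plays no part in programming the PLC; it would reappear when a different operational function is under consideration.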

Now we look into the security decision space, which makes the security decisions visible as security parameters: Is updating logic allowed during PLC operation? Does the PLC have integrity protection for its logic? How can you switch between operating modes?

Not all security parameters can be decided by security in every case. Functional requirements of other trades or simply restricted technical capabilities can lead to a situation where there is no longer any choice for the security experts. In our example, we assume it is already clear that the program update must be permitted during operation; this parameter is therefore fixed.

Now that the security parameters have been clarified, a different perspective on the function can be taken: The security problem space can be entered.

Anything that is a potential point of attack is interesting here. Notably, a point of attack is not the same as a vulnerability. Points of attack include vulnerabilities (like a programming device vulnerability in this example), but they can also be intentional design decisions that are problematic from a security point of view. Points of attack can be regarded as “indicators of insecurity”, explicitly tagging any “insecure by design” features, even if they are not in fact vulnerabilities.

You could now build attack scenarios from the points of attack, but we will settle for the result of an attack here: an undesirable event. Such an event is also information that is received from the automation engineering workflow. An example for the operational function “Program PLC”, or rather for the PLC and its associated actuators, is the hazardous situation “overpressure in the boiler”.

One could now imagine a security goal to avoid this. Example: The integrity of the PLC output values for a pump X and a heater Y.

Back to the decision space. We take the security goal from the problem space with us. Of our remaining variable parameters: Which ones do we need to set in order to ensure the integrity of the PLC output values for the pump and the heater?

The obvious choice in our simplified example is making the integrity protection mechanism as strong as possible, so we fix the value of this security parameter to the “hash” option. Of course, one could also place high hurdles on the change of operating modes and require a physical key switch.
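The walk through the decision space can be sketched as a small Python example. It is a simplified illustration, not the tool's implementation: the `SecurityParameter` class and the `open_parameters` helper are hypothetical, and the option names other than “hash” and “physical key switch” are assumptions added so that there is something to choose from.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class SecurityParameter:
    """One small decision that potentially affects the system's security."""
    name: str
    options: Tuple[str, ...]
    value: Optional[str] = None
    fixed: bool = False            # True if another discipline already decided
    rationale: Optional[str] = None

    def decide(self, option: str, rationale: str) -> None:
        """Set the parameter and record why, so the decision stays visible."""
        if self.fixed:
            raise ValueError(f"'{self.name}' is fixed and cannot be changed")
        if option not in self.options:
            raise ValueError(f"'{option}' is not an option for '{self.name}'")
        self.value = option
        self.rationale = rationale


def open_parameters(space: List[SecurityParameter]) -> List[str]:
    """Names of parameters that are neither fixed nor decided yet."""
    return [p.name for p in space if not p.fixed and p.value is None]


# Decision space for the function "Program PLC" (option lists are assumed).
decision_space = [
    SecurityParameter("logic update during operation",
                      ("allowed", "not allowed"),
                      value="allowed", fixed=True),
    SecurityParameter("integrity protection of PLC logic",
                      ("none", "checksum", "hash")),
    SecurityParameter("operating mode change",
                      ("software command", "physical key switch")),
]

# Security goal taken over from the problem space.
security_goal = "integrity of PLC output values for pump X and heater Y"

print(open_parameters(decision_space))
decision_space[1].decide("hash", rationale=security_goal)
print(open_parameters(decision_space))
```

The fixed parameter never shows up as open, which mirrors the situation where security experts no longer have a choice. Storing the security goal as the rationale is one possible way to keep the link between problem space and decision space visible.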

Outlook: A Tool for Security by Design Decisions

Now you know the main cornerstones of our Security by Design Decisions concept. Could you get started now?

Probably not.

Because you’re probably wondering who’s going to supply you with all those purple security parameters and who the hell is going to paint and maintain all those pretty pictures. And rightly so!

Therefore, we are currently working on a demonstrator for a software tool in our research project together with INEOS and HIMA.

The front end guides users through the decision point process, helps with placing each decision point in the existing process, and then supports security decision-making based on diagrams like the ones you just saw. Because here’s the thing: We know the diagrams are important to help engineers on tight time budgets quickly grasp their security decision space. (You really don’t want to edit the security parameters as an endless Excel list!)

The multitude of relevant information for making security decisions is best understood by humans through diagrams.

The backend includes a data model so that the decision space and problem space diagrams can be generated as different views of the data at any time. It also makes the database efficiently maintainable and exportable. This data model — based on AutomationML — is created by our research partner, Pforzheim University.

The third feature of the planned demonstrator is libraries. After all, a major game changer for security decision points is that they make security parameters visible, but these security parameters first have to be identified. Security parameters can well be re-used, so not every engineer needs to find them herself. Libraries per component, per operational function, or per vendor are all possible. Besides: With a good library as an example, learning a new method is much easier.
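How such a library might be queried can be sketched as follows. This is purely an assumption about one possible structure, not the demonstrator's design: the `(kind, name)` keys, the `build_library` and `suggest` helpers, the vendor name, and the library contents are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# A library entry is addressed by (kind, name), e.g. ("component", "PLC").
Key = Tuple[str, str]
Library = Dict[Key, List[str]]


def build_library() -> Library:
    """Return a tiny example library; the contents are illustrative only."""
    library: Library = defaultdict(list)
    library[("component", "PLC")] += [
        "integrity protection of PLC logic",
        "operating mode change",
    ]
    library[("operational function", "Program PLC")] += [
        "logic update during operation",
        "integrity protection of PLC logic",
    ]
    return library


def suggest(library: Library, keys: Iterable[Key]) -> List[str]:
    """Collect parameters for all keys, de-duplicated, in first-seen order."""
    seen: List[str] = []
    for key in keys:
        for parameter in library.get(key, []):
            if parameter not in seen:
                seen.append(parameter)
    return seen


library = build_library()
suggestions = suggest(library, [
    ("component", "PLC"),
    ("operational function", "Program PLC"),
    ("vendor", "ExampleVendor"),   # no entry yet, contributes nothing
])
for parameter in suggestions:
    print(parameter)
```

An engineer would then start from the suggested parameters instead of an empty page and only add what is specific to the project.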

Even if you forget everything you’ve read about Security by Design for automation systems so far in this article, I hope that you take these three key messages with you:

- Security by design must be achievable regardless of the type of automation engineering workflow.

- It is impossible not to make security decisions. Security by design needs to make them visible.

- The multitude of relevant information for making security decisions is best understood by humans if presented visually.

I hope that from now on, when someone asks you about Security by Design, you’ll say “sure, it’s really just a bag of red triangles!”.

This work is funded by the German Federal Ministry of Education and Research as part of the research project “IDEAS”. The corresponding scientific paper has been published in the proceedings of the AUTOMATION Congress (in German), which took place in Baden-Baden, Germany, June 28–29, 2022. An English paper is in press.